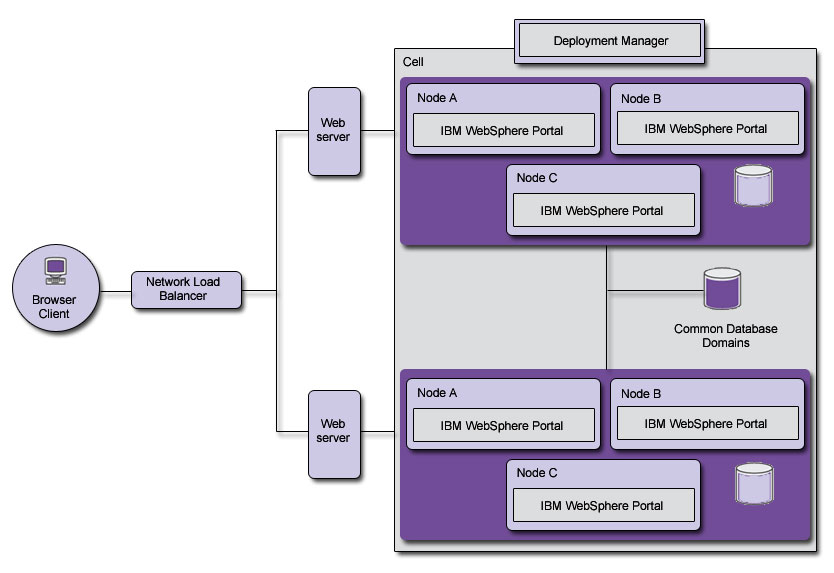

Multiple clusters

To improve server availability, failover, and disaster recovery, and to help span large geographical deployments, consider deploying multiple portal clusters for the same portal site. With multiple clusters, one cluster can be taken out of production use, upgraded, and tested while the other clusters remain in production.

This is the best means of achieving 100% availability without requiring maintenance windows. It is also possible to deploy clusters closer to the people they serve, improving the responsiveness of the content they provide.
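The rolling maintenance idea described above can be sketched as a simple schedule: each step takes exactly one cluster out of production while every other cluster keeps serving traffic. This is a minimal illustration, assuming interchangeable clusters; the cluster names are hypothetical and the function is not part of any portal API.

```python
from typing import Dict, List

def rolling_maintenance_plan(clusters: List[str]) -> List[Dict[str, object]]:
    """Build a rolling maintenance schedule with one step per cluster.

    In each step a single cluster is taken out of production for upgrade
    and testing, while every other cluster keeps serving traffic.
    """
    if len(clusters) < 2:
        # With only one cluster, maintenance would take the whole site offline.
        raise ValueError("rolling maintenance needs at least two clusters")
    plan = []
    for target in clusters:
        serving = [c for c in clusters if c != target]
        plan.append({"maintenance": target, "serving": serving})
    return plan

# Hypothetical cluster names, for illustration only.
for step in rolling_maintenance_plan(["cluster-a", "cluster-b", "cluster-c"]):
    print(f"upgrade {step['maintenance']}; production stays on {step['serving']}")
```

The check for at least two clusters captures why a single cluster cannot avoid maintenance windows: there is nothing left to serve production traffic while it is being upgraded.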

When deploying multiple portal clusters, treat each cluster, for the most part, as a fully isolated system. Each cluster is administered independently and has its own configuration, separate from the other clusters. This isolation makes the topology easier to maintain without compromising its high availability. The only exception is the sharing of certain portal database domains, specifically the Community and Customization domains, which are designed to be shared across multiple clusters that present the same portal site. These domains store portal configuration data owned by the end users themselves, so it is important to keep this data synchronized across all identical portal clusters. See Database for more information.
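The sharing rule above can be expressed as a layout check: the Community and Customization domains must resolve to one logical database for every cluster, while any other domain must be private to each cluster. This is a hedged sketch; the layout format, the database identifiers, and the use of a `release` domain as the example of a non-shared domain are assumptions for illustration. A "logical database" here may be one physical database or a set of replicas kept synchronized.

```python
from typing import Dict, List

# Domains that are designed to be shared across clusters presenting the same site.
SHARED_DOMAINS = {"community", "customization"}

def check_domain_layout(layout: Dict[str, Dict[str, str]]) -> List[str]:
    """layout maps cluster name -> {domain name -> logical database id}.

    Returns a list of problems; an empty list means the layout follows the rule
    "share only Community and Customization, isolate everything else".
    """
    problems = []
    clusters = list(layout)
    domains = set().union(*(layout[c].keys() for c in clusters))
    for domain in sorted(domains):
        targets = {c: layout[c].get(domain) for c in clusters}
        distinct = set(targets.values())
        if domain in SHARED_DOMAINS:
            # Every cluster must point at the same (possibly replicated) database.
            if len(distinct) != 1 or None in distinct:
                problems.append(f"{domain}: must use one shared database, got {targets}")
        elif len(distinct) != len(clusters) or None in distinct:
            # Non-shared domains: each cluster needs its own database.
            problems.append(f"{domain}: each cluster needs its own database, got {targets}")
    return problems

# Hypothetical two-cluster layout.
layout = {
    "cluster-a": {"release": "db-a-release", "community": "db-shared", "customization": "db-shared"},
    "cluster-b": {"release": "db-b-release", "community": "db-shared", "customization": "db-shared"},
}
print(check_domain_layout(layout) or "layout OK")
```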

Clusters can be deployed within the same data center, to improve maintainability and failure isolation, or across multiple data centers, to protect against natural disasters and data center failures or simply to provide broader geographical coverage of the portal site. The farther apart the clusters are, the greater the impact of network latency between them. As that distance grows, sharing the same physical database for the shared domains becomes less practical, and you will likely want to use database replication techniques to keep the databases synchronized instead.
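A rough calculation shows why distance matters when clusters share one physical database. If each portal request makes several sequential database calls, every call pays the network round-trip time (RTT) to the remote database. The query count and RTT values below are hypothetical, chosen only to illustrate the scaling, and real portal workloads may batch or cache calls differently.

```python
def added_network_wait_ms(queries_per_request: int, rtt_ms: float) -> float:
    """Network wait added to one portal request whose database calls each
    cross to a remote database server, assuming the calls run one after
    another and each costs exactly one round trip."""
    return queries_per_request * rtt_ms

# Hypothetical figures: 20 sequential queries per request.
local = added_network_wait_ms(20, 0.5)    # clusters sharing a database in one data center
remote = added_network_wait_ms(20, 80.0)  # a cluster reaching a database on another continent
print(f"local: {local} ms, remote: {remote} ms")  # local: 10.0 ms, remote: 1600.0 ms
```

Replicating the shared domains to a database near each cluster keeps these calls local and moves the cross-site cost into the replication process instead of the user's request.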

Typically, in a multiple portal cluster topology, HTTP Servers are dedicated to each cluster, because the HTTP Server plug-in's configuration is cell-specific. To route traffic between data centers (banks of HTTP Servers), a separate network load-balancing appliance is used, with rules that route users to specific data centers based either on locality or on random site selection, such as through DNS resolution. Domain-based, or locality-based, data center selection is preferred because it predictably keeps the same user routed to the same data center, which helps preserve session affinity and efficiency. Be aware that routing based on DNS resolution can behave unpredictably and suddenly send a user to another data center during a live session. If this happens, the user's experience with the portal site may be disrupted, because the user must be authenticated again on the new site and then attempt to resume at the point where they left off. Session replication and proper use of portlet render parameters can help reduce this effect.
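The difference between the two routing policies can be sketched in a few lines: locality-based selection is a deterministic lookup, so repeated requests from the same user land in the same data center, while random selection (comparable in effect to DNS-based routing) can change data centers between requests. The region names, data center names, and functions are hypothetical; a real load-balancing appliance implements these rules in its own configuration.

```python
import random
from typing import Dict, List

def locality_route(user_region: str, region_to_dc: Dict[str, str], default_dc: str) -> str:
    """Locality-based selection: a given region always maps to the same data center."""
    return region_to_dc.get(user_region, default_dc)

def random_route(data_centers: List[str], rng: random.Random) -> str:
    """Random selection, comparable in effect to DNS-based routing:
    each new resolution may return a different data center."""
    return rng.choice(data_centers)

# Hypothetical regions and data center names.
REGION_TO_DC = {"emea": "dc-frankfurt", "americas": "dc-dallas"}
DATA_CENTERS = sorted(set(REGION_TO_DC.values()))

rng = random.Random(42)
# Ten requests from the same user during one live session.
by_locality = {locality_route("emea", REGION_TO_DC, "dc-dallas") for _ in range(10)}
by_random = {random_route(DATA_CENTERS, rng) for _ in range(10)}
print("locality:", by_locality)  # always a single data center
print("random:  ", by_random)    # may contain more than one data center
```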

You can decide to adopt one of the following configurations with your multiple portal clusters:

- Active/active

- In this configuration, all portal clusters receive end-user traffic simultaneously. Network load balancers and HTTP Servers keep the traffic balanced evenly across the servers in each cluster. If one cluster requires maintenance, all production traffic is switched to the other clusters. The topology must therefore be sized so that the remaining active clusters can handle all production traffic while one cluster is undergoing maintenance; otherwise, maintenance must be performed during off-peak hours.

- Active/passive

- In this configuration, under normal circumstances, all production traffic is routed to a subset of the available portal clusters (for example, 1 of 2, or 2 of 3), so one cluster is always receiving no traffic. Maintenance is typically applied first to the offline cluster, which is then brought into production while each of the remaining clusters is taken out and maintained in the same way. This requires that a subset of the clusters be sized to handle all production traffic.
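The sizing rules for both configurations reduce to simple capacity checks. The sketch below assumes every cluster has the same capacity and that load is expressed in a single unit such as requests per second; the figures in the example are hypothetical and are not IBM sizing guidance.

```python
import math

def active_active_ok(cluster_capacity: float, n_clusters: int, peak_load: float) -> bool:
    """Active/active: with one cluster out for maintenance, the remaining
    n_clusters - 1 clusters must absorb the full production load."""
    return (n_clusters - 1) * cluster_capacity >= peak_load

def active_passive_ok(cluster_capacity: float, n_active: int, peak_load: float) -> bool:
    """Active/passive: the active subset alone must carry all production traffic."""
    return n_active * cluster_capacity >= peak_load

def min_clusters_active_active(cluster_capacity: float, peak_load: float) -> int:
    """Smallest cluster count that still covers peak load with one cluster down."""
    return math.ceil(peak_load / cluster_capacity) + 1

# Hypothetical figures: peak of 900 requests/s, each cluster handles 500 requests/s.
print(active_active_ok(500, 2, 900))         # False: one remaining cluster gives only 500
print(active_active_ok(500, 3, 900))         # True: two remaining clusters give 1000
print(active_passive_ok(500, 2, 900))        # True: "2 of 3" active clusters give 1000
print(min_clusters_active_active(500, 900))  # 3
```

If the active/active check fails for the available cluster count, the text's alternative applies: restrict maintenance to off-peak hours, when the peak load figure is lower.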

As an alternative to deploying each portal cluster in a different cell, you can also deploy multiple portal clusters in the same cell. Separate cells give you total isolation between clusters and the freedom to maintain every aspect of each cluster without affecting the others. However, separate cells require separate Deployment Managers, and therefore separate Administration Consoles, to manage each cluster. Multiple clusters in the same cell reduce administration to a single console, but increase the effort needed to maintain the clusters, because the clusters share a high degree of resources.

While deploying multiple portal clusters in a single cell has its uses, especially for consolidating content authoring and rendering servers for a single tier, it significantly increases administrative complexity. IBM recommends deploying multiple portal clusters in multiple cells to keep administration as simple as possible. See Multiple portal clusters in a single cell for more details.

Related concepts

Single-server topology

Stand-alone server topology

Horizontal cluster topology

Vertical cluster topology

Combination of horizontal and vertical clusters

Single-server topology for Web Content Management

Dual-server configuration for Web Content Management

Staging-server topology for Web Content Management

Database

Related tasks

Install multiple clusters in a single cell on AIX

Install multiple clusters in a single cell on HP-UX

Install multiple clusters in a single cell on i5/OS

Install multiple clusters in a single cell on Linux

Install multiple clusters in a single cell on Solaris

Install multiple clusters in a single cell on Windows